How to Design Experiments for Your Product

Why changing button colors isn’t enough

Google Jedi Marissa Mayer allegedly tested multiple variations of the color blue until she found the perfect shade of blue to increase conversions at Google. This kind of experimentation may be reasonable when you have tens of millions of visitors a day and can achieve statistically significant results in seconds, but many of us don’t.

Experiments can provide valuable insights for your product. However, many companies run experiments for the sake of running experiments, without gaining any real insights that propel the product forward. Merely uttering the words ‘A/B test’ is sometimes seen as an achievement in itself, but if you don’t truly learn anything from your test, testing can be a waste of time and precious development effort.

To learn from your experiments, you need to spend time upfront designing experiments that will deliver conclusive results. Changing button colors may give you incremental improvements, but if you’re not Google or you don’t have the resources to run hundreds of concurrent experiments, you’re probably better off focusing on a few distinct experiments which answer interesting questions and provide real, tangible insights that can drive long term improvements or help you achieve your product goals.

How experiments fit into the overall picture

Product managers are busy people. And there’s a temptation to run experiments to merely demonstrate to people in your organisation that you’re running experiments. This is mostly a waste of your time. You’re better off not running any experiments than wasting precious planning / development time on irrelevant, vanity experimentation.

Since we’re such busy folks, experiments should be run as genuine pieces of scientific, investigative work to try and find insights into problems and answers to interesting questions which help you to achieve your goals.

Experiments should supplement, not supplant, your product OKRs, goals and roadmaps. They are a tool which assists you along the path to achieving your goals and have little inherent value to your users by themselves.

Your OKRs, backlog and roadmap remain your focus and delivering a product which your customers love remains your primary purpose as a product manager.

Armed with results from a genuinely insightful experiment, you can inform your roadmap, your team and your stakeholders and reassess your goals with the insights you’ve gained.

For startups, your entire business is an experiment. You need to use experimental discovery methods and processes to find product / market fit. Often, startups do not have the traffic required to generate results that are statistically significant in any meaningful way so if you’re a product manager working at a startup it may be best to steer away from optimisation experiments such as changing text and button colors and instead focus on your overall goals and experimenting with your value proposition itself to ensure you’re delivering value and building a viable product.
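To get a rough feel for how much traffic ‘enough’ is, here is a minimal sketch in Python. It uses the standard normal approximation for comparing two conversion rates; the function name, the 5% significance level and the 80% power are my assumptions for illustration, not fixed rules, and the 0.5% baseline is just an example figure.

```python
from math import ceil
from statistics import NormalDist


def visitors_needed_per_variant(baseline_rate, expected_rate, alpha=0.05, power=0.8):
    """Approximate visitors needed in EACH variant to detect the difference
    between two conversion rates (two-sided test, normal approximation)."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # significance threshold
    z_beta = NormalDist().inv_cdf(power)           # desired power
    variance = baseline_rate * (1 - baseline_rate) + expected_rate * (1 - expected_rate)
    effect = expected_rate - baseline_rate
    return ceil((z_alpha + z_beta) ** 2 * variance / effect ** 2)


# Hypothetical numbers: a 0.5% baseline registration rate.
small_tweak = visitors_needed_per_variant(0.005, 0.0055)  # hoping for 0.5% -> 0.55%
bold_change = visitors_needed_per_variant(0.005, 0.01)    # hoping for 0.5% -> 1%
print(small_tweak, bold_change)
```

Under these assumptions, the small tweak needs somewhere in the hundreds of thousands of visitors per variant, whereas the bold change needs only a few thousand. That gap is why low traffic products are usually better served by bigger, bolder tests.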

For larger corporates, experimentation can be large and small scale at a departmental level and at a board level. Large companies have the luxury to experiment on a massive scale and test entire end to end products like Amazon Dash or Echo. Similarly, they also have the ability to conduct micro experiments at the departmental level. If you’re a product manager who is not operating at the board level, you’ll most likely be working on smaller scale experiments to optimise your product.

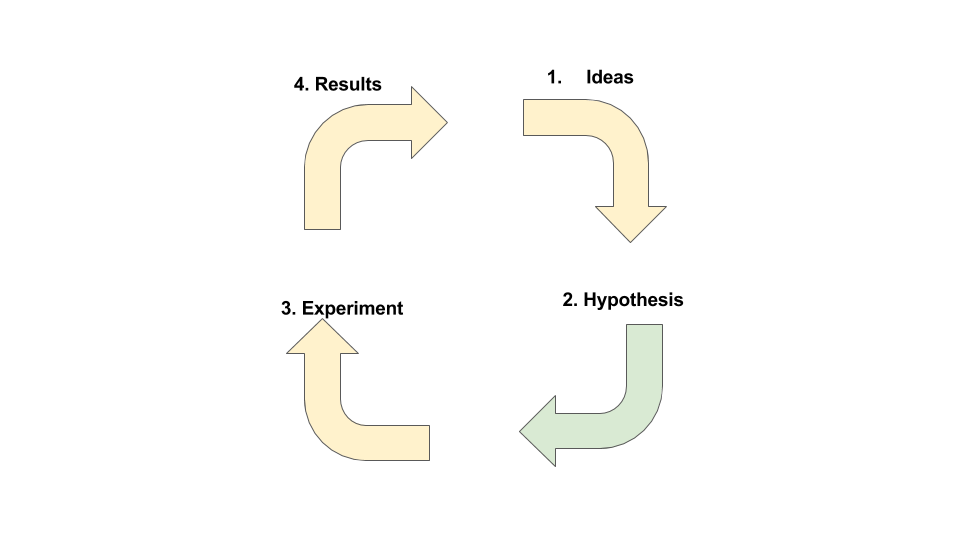

How to conduct experiments

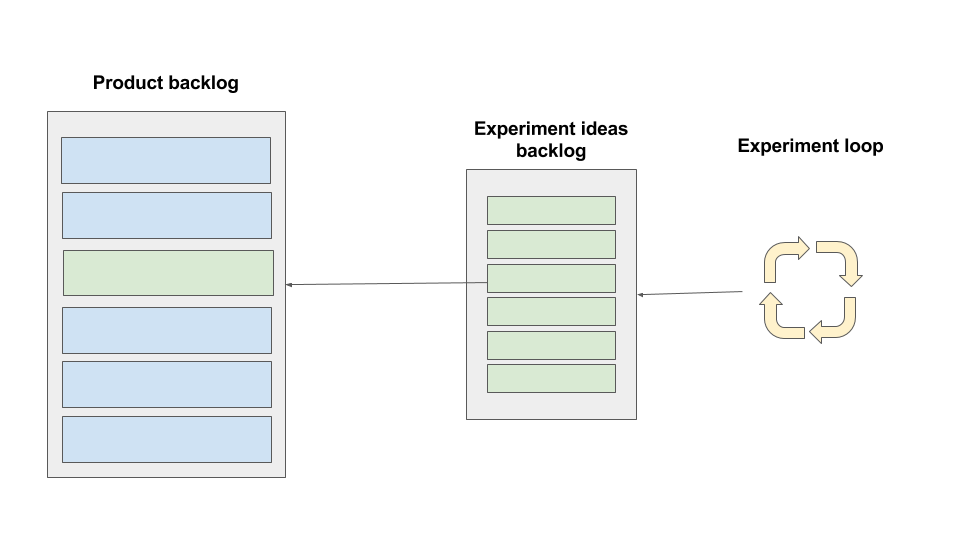

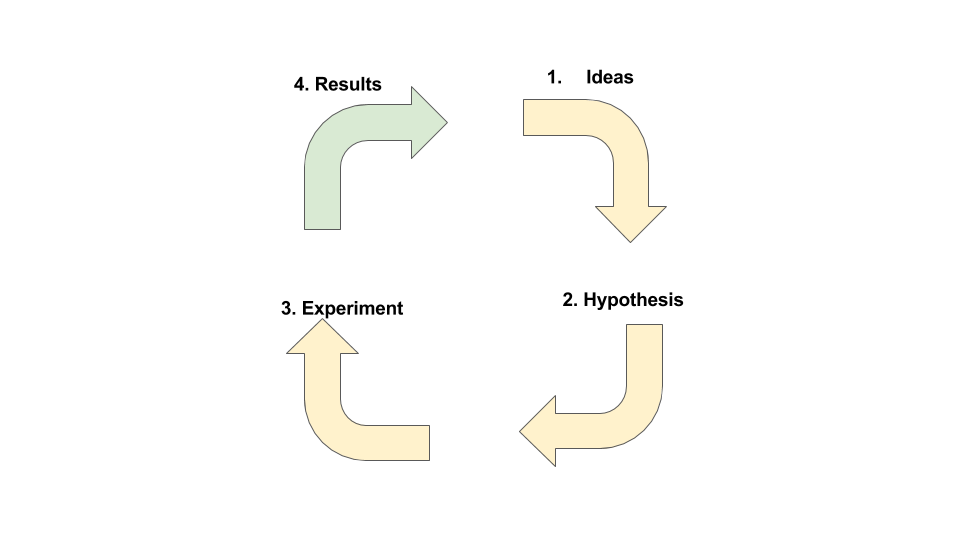

There are several steps involved in designing, prioritising and conducting experiments and then acting on the results.

- Ask questions and generate ideas

- Create a testable hypothesis

- Conduct your experiment

- Communicate your results

- Prioritise your results

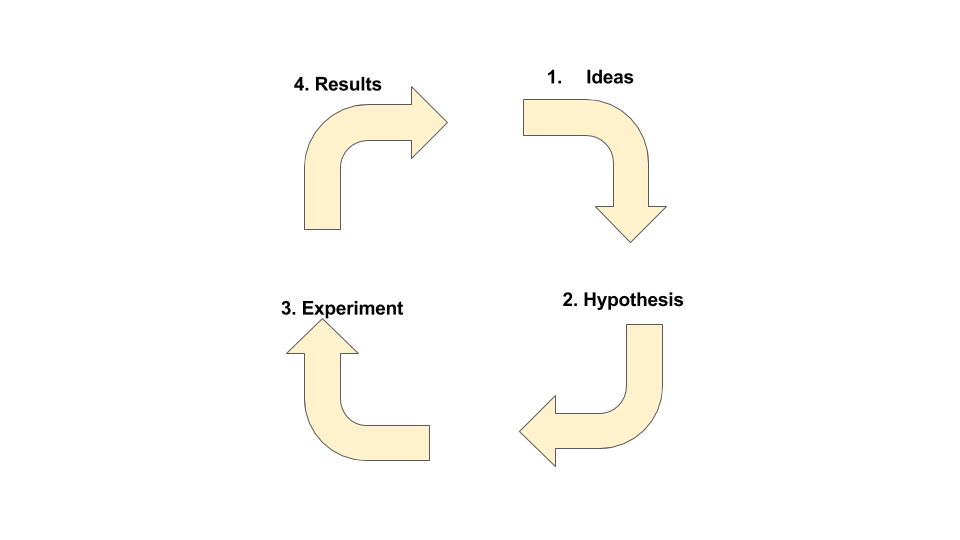

1. Ask questions and generate ideas

All experiments start with a question. Your experiment is an attempt to answer your question through a series of hypotheses.

The question you ask should be linked to your product goals. For example, if your goal is to achieve a 10% visit to lead conversion rate on your sign up page, you could ask the question ‘what can we do to change the sign up form to increase the conversion rate?’.

If a product goal in an ecommerce product is to generate 15% more revenue year on year, your question could be ‘what is the best way to increase the average order value per customer from $20 to $30?’.

Questions are a form of ideation which helps to create hypotheses that can be tested.

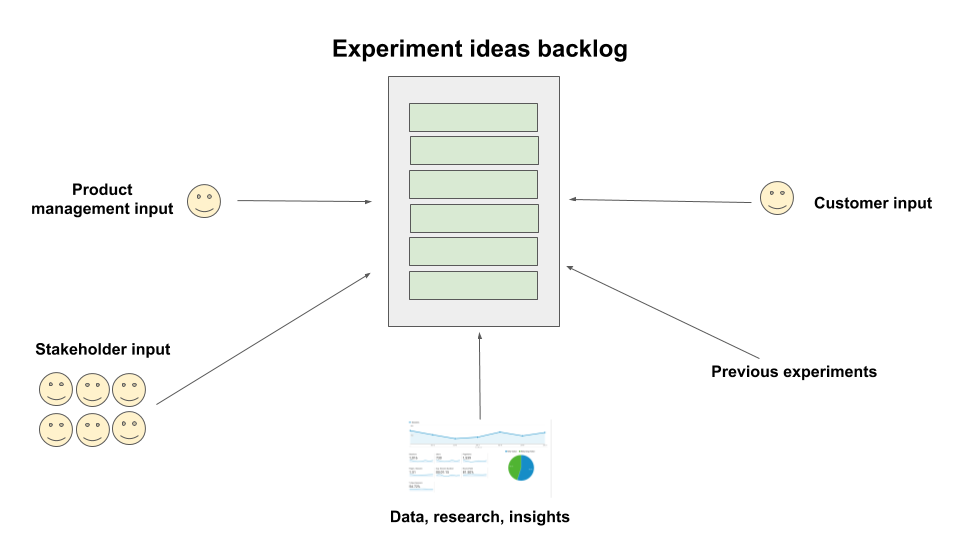

The questions and ideas that you’re interested in exploring should ideally come not only from your own head but also from your stakeholders from across the business.

As a product manager, you’ll no doubt be in constant communication with many stakeholders across the business who (hopefully) know what’s on the roadmap and why.

How to involve stakeholders

If you do decide to conduct experiments, make sure you involve stakeholders at this stage in the process of generating hypotheses by asking questions that are relevant to both them and your product goals.

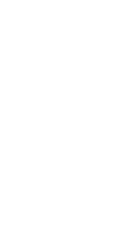

A simple way to do this is to create an experiment backlog which can either be a spreadsheet accessible by all or a Trello board where stakeholders can dump their ideas quickly and efficiently.

Decide on a structure for your experiment backlog and communicate the structure clearly to all your stakeholders. Make it clear that the structure must be adhered to for the experiment to be prioritised / understood effectively.

Stakeholders are busy too so ensure the structure you decide upon is easily understood by everyone. If you make the process of suggesting an experiment difficult you’re already setting yourself up for a fail. Keep it simple.
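As an illustration, a backlog structure can be as small as three or four fields. The sketch below is one possible shape, written in Python; the field names (`question`, `linked_goal`, `suggested_by`, `expected_impact`) are hypothetical and you should swap in whatever your stakeholders will actually fill in.

```python
from dataclasses import dataclass, fields


@dataclass
class ExperimentIdea:
    """One row in the experiment backlog (hypothetical structure)."""
    question: str              # the question the experiment should answer
    linked_goal: str           # the product goal or OKR it relates to
    suggested_by: str          # who to go back to with the results
    expected_impact: str = ""  # best guess at what would change


def missing_fields(idea):
    """Return the names of any fields the stakeholder left blank."""
    return [f.name for f in fields(idea) if not getattr(idea, f.name).strip()]


idea = ExperimentIdea(
    question="What can we change on the sign up form to increase the conversion rate?",
    linked_goal="10% visit to lead conversion rate on the sign up page",
    suggested_by="Marketing",
)
print(missing_fields(idea))  # ['expected_impact']
```

A check like `missing_fields` is only there to show how little structure you need; a required column in a spreadsheet does the same job.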

With a backlog prepared, you’ll need to prioritise.

Once your stakeholders have been involved in the idea generation and question generation process, you will then need to prioritise which questions / ideas to take forward.

You may have your own methods for prioritising experiments, and there is no one definitive answer on the best way to prioritise experiments for your product.

Here are some methods to help you prioritise your experiment ideas.

- Impact vs effort – if you find out the answer to this question or you discover that this idea is one that should be implemented, what is the impact of this? What material change will happen as a result of this? Impact for your product may be different to impact for another. Be specific and attempt to articulate the best and worst case outcomes. In addition to the impact, consider the technical challenges or effort involved in implementing the experiment itself and, if the experiment can be done in a light touch way, the effort involved in implementing the final changes.

- OKRs – how do these experiment ideas / questions link to your company or product objectives and key results (OKRs)? If you don’t use the OKR framework, how do these experiment ideas or questions help you to achieve your short term and long term goals? What will happen as a result of discovering the answer to these questions and how would your roadmap change? If the potential experiments don’t help you achieve your goals, why would you prioritise them?

You’ll no doubt use similar prioritisation frameworks for your overall backlog, so use the same principles here. Much of this is guesswork, since that’s how scientific experimentation works, but try your best to put together a prioritisation process that works for you.
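If it helps to make the two methods above concrete, here is a rough sketch in Python that drops ideas with no link to a goal and ranks the rest by impact divided by effort. The 1 to 5 scores, the idea names and the ratio itself are assumptions for illustration; the scores are still guesses, just written down.

```python
# Hypothetical backlog: impact and effort are 1-5 guesses agreed with the team.
ideas = [
    {"name": "Reduce homepage buttons", "impact": 4, "effort": 2, "linked_to_goal": True},
    {"name": "Change button colour", "impact": 1, "effort": 1, "linked_to_goal": True},
    {"name": "Redesign footer", "impact": 2, "effort": 3, "linked_to_goal": False},
]


def prioritise(ideas):
    """Keep only ideas tied to a goal, then sort by impact per unit of effort."""
    relevant = [idea for idea in ideas if idea["linked_to_goal"]]
    return sorted(relevant, key=lambda idea: idea["impact"] / idea["effort"], reverse=True)


ranked = [idea["name"] for idea in prioritise(ideas)]
print(ranked)  # ['Reduce homepage buttons', 'Change button colour']
```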

Involve stakeholders at this stage if you think it’s appropriate. Work with stakeholders to determine which experiments to tackle. If needs be, get everyone together on a call or in a meeting so that you can discuss which of the ideas will be prioritised first.

Whilst stakeholders should have a say in what goes into the backlog, the product manager should make the final decision on prioritisation. Stakeholders will be more interested in the prioritisation of your roadmap and less interested in your experimentation backlog so if you think involving them in this stage might just confuse things, just crack on yourself and ping around a note instead to explain which experiments will be prioritised and why.

2. Create a hypothesis

If you’re not from a particularly scientific background (and many product managers are not), you might feel slightly uncomfortable with some of the language bandied about when creating experiments.

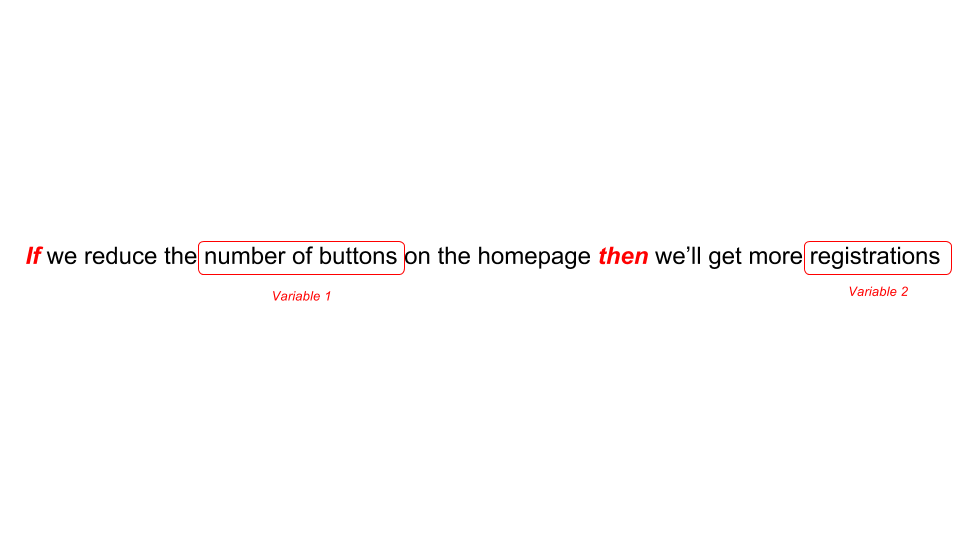

The most important term to understand fully is the concept of a hypothesis. A hypothesis is a testable statement which can be proved or disproved. It’s an educated guess or a prediction about the relationship between 2 variables.

There are a number of ways you can create a hypothesis, using general statements or analysing non-directional, directional, causal and relational propositions, but unless you’re a full time data scientist, and taking into consideration that we’re all busy bees, I’d recommend sticking to the simplest way of creating hypotheses, which is to use the ‘if then’ statement.

What’s an if then statement? Let’s look at some examples.

- If we reduce the number of buttons on the homepage then we’ll get more registrations

- If we make the signup form responsive then we’ll get an uplift in registrations on mobile devices

- If we decrease the price of our starter package then we’ll get more customers

The if then statements clearly articulate your hypothesis and your prediction. Each of these examples has 2 variables which will allow you to create your experiment. Variable 1 is known as the independent variable – since it’s not dependent on the other. Variable 2 is known as the dependent variable, since if you change the independent variable, this will change too. It is dependent on the independent variable.

One way to know whether you’ve created a strong hypothesis is to distill your hypothesis down into the 2 variables that will affect the outcome of your experiment.

Let’s look at an example again.

If we reduce the number of buttons on the homepage then we’ll get more registrations

The 2 variables in this statement are the number of buttons on the homepage and the number of registrations. Your hypothesis suggests that the number of registrations depends on the number of buttons on the homepage, so the number of registrations is the dependent variable and the number of buttons is the independent variable.

You can also add a ‘because’ clause to your if statements to articulate the rationale which supports your hypothesis. An example could be:

If we reduce the number of buttons then we’ll get more registrations because there is only 1 choice for the user to click on

You don’t have to use an ‘if then’ statement to form your hypothesis if you don’t want to; so long as your hypothesis is a statement which has variables that can be measured in experiments your hypothesis will still be valid.
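If you keep your hypotheses in a spreadsheet or tool, it can help to store the parts separately so that the 2 variables are always explicit. A minimal sketch in Python, where the class and field names are my own invention rather than any standard:

```python
from dataclasses import dataclass


@dataclass
class Hypothesis:
    """An 'if then because' hypothesis split into its parts (hypothetical structure)."""
    change: str            # what you alter: the independent variable
    expected_outcome: str  # what you expect to move: the dependent variable
    rationale: str = ""    # optional 'because' clause

    def statement(self):
        text = f"If we {self.change} then {self.expected_outcome}"
        if self.rationale:
            text += f" because {self.rationale}"
        return text


h = Hypothesis(
    change="reduce the number of buttons on the homepage",
    expected_outcome="we'll get more registrations",
    rationale="there is only 1 choice for the user to click on",
)
print(h.statement())
```

If you can’t fill in both `change` and `expected_outcome` with something measurable, that is a good sign the hypothesis needs more work.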

At this point, you will have generated ideas and interesting questions to ask and created some hypotheses on the basis of those questions.

For example, you may start by asking this question:

Why is our registration rate only 0.5% on the homepage?

This results in a number of hypotheses:

- If the button was blue then we’d increase registrations

- If there was only 1 button on the homepage then we’d increase the registration rate because the user would only have 1 choice

- If there was a pop up form then we’d increase registrations because we’ve seen in previous experiments that pop up forms have a conversion rate of 10%

Once you have a list of hypotheses, these will then also need to be prioritised. You cannot and should not attempt to test every possible hypothesis. As with prioritising ideas / questions, there is no single framework to use for prioritising hypotheses. You should consider:

- Impact – What is the impact on your goals if your hypothesis is proven correct / incorrect?

- Evidence – What evidence do you have to support your hypothesis? You may have previous experiment data, industry insights, reports or other evidence to support your hypothesis.

Once you decide on a hypothesis to test, you’ll then start the fun of actually conducting the experiment.

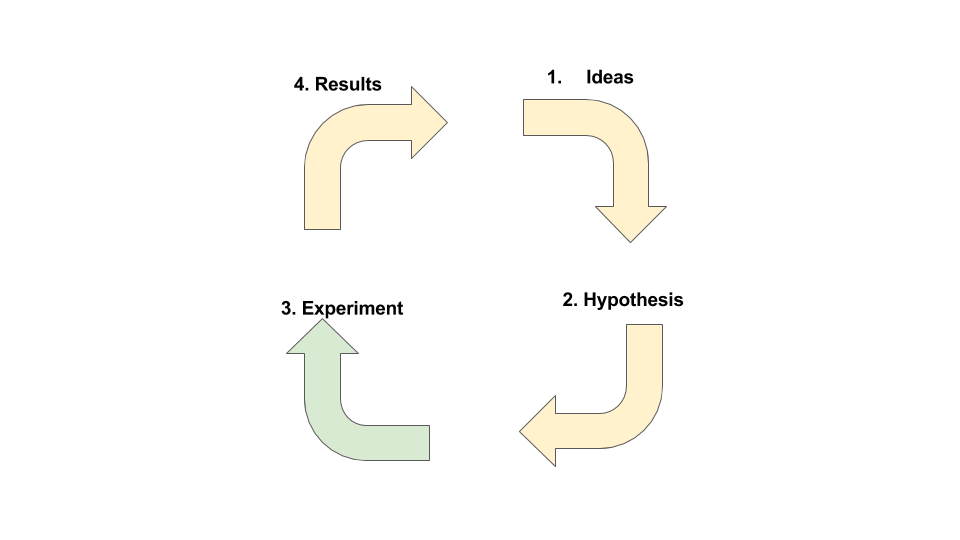

3. Conduct your experiment

There are 2 common ways to run product experiments:

- A/B testing

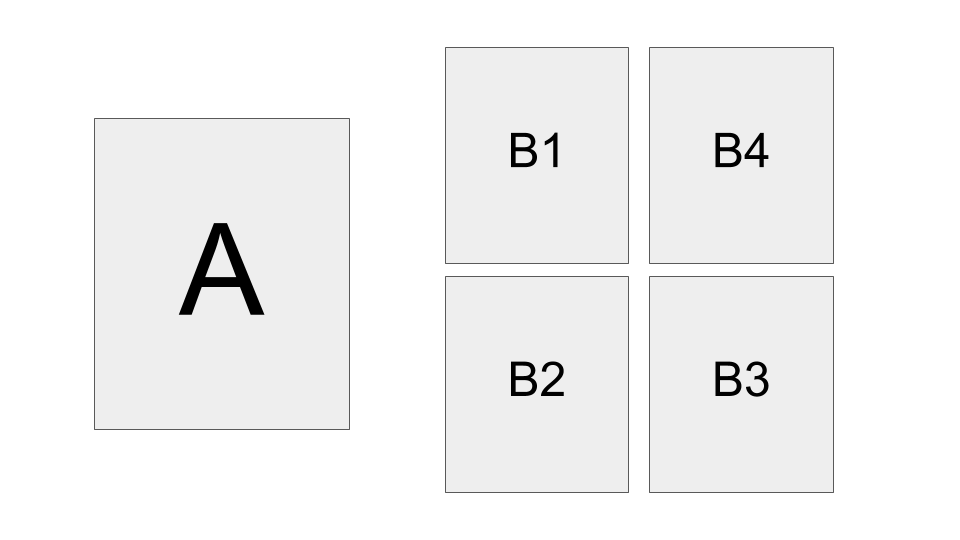

- Multivariate testing

There are, of course, plenty of other ways to run experiments, but these are the 2 most common so we’ll stick to these.

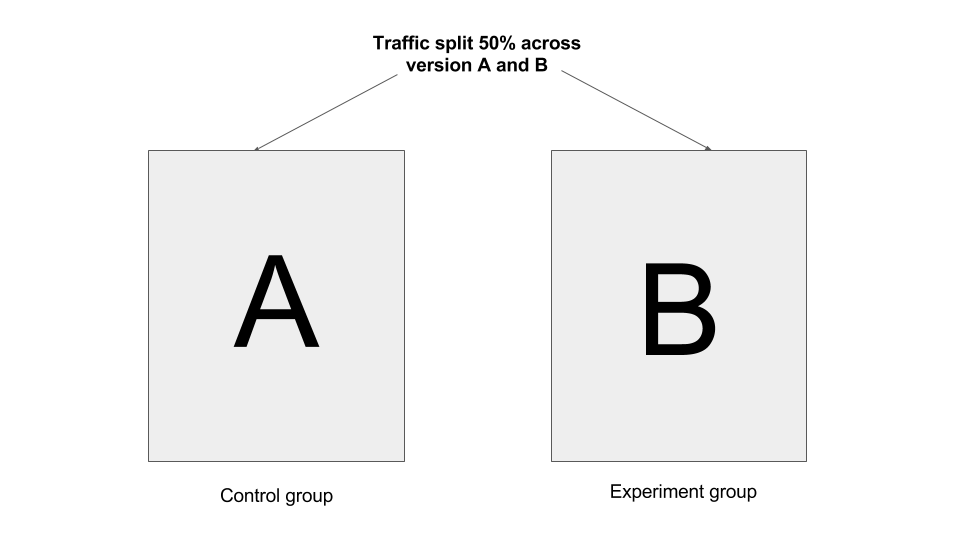

A/B testing involves 2 variations of the same page or feature: version A and version B. Multivariate testing involves changing several elements of the same page at once and testing the resulting combinations to identify which performs best.

Your experiment will consist of a control group, which is the group of people exposed to your existing page, and one or more experiment groups, which are the groups of people exposed to a new version or versions of your page or feature. The new version will be created with your hypothesis in mind.
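How people end up in each group matters: the same person should see the same version every time they come back. One common way to do this, sketched below in Python, is to hash a user identifier together with the experiment name. The function name and identifiers are hypothetical, and most experimentation tools handle this for you.

```python
import hashlib


def assign_group(user_id, experiment_name, groups=("control", "experiment")):
    """Deterministically place a user in one of the groups.

    Hashing the experiment name with the user id means the same user always
    lands in the same group for this experiment, but may land in a different
    group for another experiment.
    """
    key = f"{experiment_name}:{user_id}".encode("utf-8")
    bucket = int(hashlib.sha256(key).hexdigest(), 16) % len(groups)
    return groups[bucket]


print(assign_group("user-42", "homepage-buttons"))
```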

Aim to be as bold as you possibly can be with your experiment. Don’t always go for incremental, small changes that will result in 1 or 2% uplifts. Try completely changing the layout of your page; remove elements, add elements and be quite drastic to try and achieve drastic results.

You may find some resistance internally to experimenting with bold changes but in order to achieve radical results you often have to try radical experiments. Changing button colors alone is fine – and safe – if you’re looking for small optimizations across high volume sites but it’s through bold experimentation that you’ll learn more.

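Let’s look at an example. Take the hypothesis from earlier: if we reduce the number of buttons on the homepage then we’ll get more registrations. Your control group would see the existing homepage and your experiment group would see a version with fewer buttons.

Your experiment would test your hypothesis and at the end of your experiment you would have a result. Your result would tell you whether or not the evidence supports your hypothesis.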
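One common way to judge a result like this is a two-proportion z-test, which asks how likely a difference this large would be if the two versions really performed the same. The sketch below is a simplified Python version with made-up numbers; it is not the only valid test, and your experimentation tool will usually calculate this for you.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_p_value(conversions_a, visitors_a, conversions_b, visitors_b):
    """Two-sided p-value for the difference between two conversion rates.

    Assumes there is at least one conversion and one non-conversion overall,
    otherwise the standard error is zero and the test is undefined.
    """
    rate_a = conversions_a / visitors_a
    rate_b = conversions_b / visitors_b
    pooled = (conversions_a + conversions_b) / (visitors_a + visitors_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / visitors_a + 1 / visitors_b))
    z = (rate_b - rate_a) / std_err
    return 2 * (1 - NormalDist().cdf(abs(z)))


# Hypothetical result: control converts 50 of 10,000, experiment 90 of 10,000.
p_value = two_proportion_p_value(50, 10_000, 90, 10_000)
print(p_value < 0.05)  # True: unlikely to be chance at the usual 5% threshold
```

A small p-value is evidence that the change made a difference; it does not prove the ‘because’ part of your hypothesis.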

There is a downside to running bolder experiments, which is that bold experiments mean more time, effort and resource since it’s not as simple as just changing a shade of button. If you do decide to run an experiment, it is worth throwing some resource behind putting together a real alternative to the current solution.

Your backlog and the features you build are all essentially experiments; you do not know what will happen when you build a new feature and it is only through building the lightest touch version of what you believe to be a good idea that you ever find out whether it is or not. Whilst your stakeholders might be reluctant to throw time and effort into experimentation, remind them that everything you build is, to some extent, an experiment.

Some companies have their own experimentation platforms or indeed separate experimentation teams, which is nice.

If you don’t have the luxury of your own custom built experimentation platform here’s a selection of options to use for conducting your experiments:

- Optimizely – perhaps the most widely known experimentation platform, Optimizely only needs a JavaScript snippet to run. It has a detailed reporting dashboard and a WYSIWYG editor which makes changes simple to make.

- Google Analytics – allows you to set up experiments for free. Fab.

- Omniture – a paid for analytics tool which has experiment functionality built in

- Traffic driven marketing – splitting your advertising budget across 2 different landing pages is a lightweight approach to running experiments

- In house traffic splitting – whilst you might not have the time or resource to build your own experimentation platform, speak to your engineers anyway to see if there’s a light touch, creative way to split certain traffic across 2 different pages.

- Feature flagging – sometimes your engineers can enable a feature flag for a specific feature and enable it only to a specific percentage of your audience. This helps you to experiment with or test a feature before it goes live.
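To show what the feature flagging option might look like in practice, here is a minimal Python sketch of a percentage based flag. It is an assumption about how your engineers could build it, not a description of any particular flagging product, and the names are hypothetical.

```python
import hashlib


def is_feature_enabled(user_id, flag_name, rollout_percent):
    """Return True if this user falls inside the rollout percentage.

    Each user gets a stable bucket from 0 to 99, so raising the percentage
    only ever adds users; nobody who had the feature loses it.
    """
    key = f"{flag_name}:{user_id}".encode("utf-8")
    bucket = int(hashlib.sha256(key).hexdigest(), 16) % 100
    return bucket < rollout_percent


# Hypothetical flag shown to 10% of users.
print(is_feature_enabled("user-42", "new-signup-form", 10))
```

Because the buckets are stable, the same mechanism can later be used to roll the feature out gradually by raising the percentage.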

The key is to ensure you can measure all the metrics that matter to this experiment, which typically correspond to the variables in your hypothesis. If you’ve created an experiment but you aren’t able to measure it, it isn’t an experiment. Make sure the tools you use to create your experiment allow you to measure its results!
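At its simplest, measuring means being able to say, for each group, how many people saw the page and how many did the thing you care about. A rough Python sketch, assuming you can export events as (group, user id, converted) rows; that format is hypothetical and yours will differ:

```python
from collections import defaultdict


def conversion_rates(events):
    """Conversion rate per group, counting each user once."""
    visitors = defaultdict(set)
    converted = defaultdict(set)
    for group, user_id, did_convert in events:
        visitors[group].add(user_id)
        if did_convert:
            converted[group].add(user_id)
    return {group: len(converted[group]) / len(visitors[group]) for group in visitors}


# Made-up events: (group, user id, did this visit end in a registration?)
events = [
    ("control", "u1", False),
    ("control", "u2", True),
    ("experiment", "u3", True),
    ("experiment", "u4", True),
    ("experiment", "u3", False),  # repeat visit by u3, still counted once
]
rates = conversion_rates(events)
print(rates)  # {'control': 0.5, 'experiment': 1.0}
```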

How to test ideas without too much development effort

To reduce potential waste, try your best to keep the experiment as lean as possible, without dragging resources away from other important pieces of work to build things that might not actually work.

Some tips on how to do this include:

- MVP – don’t strive for absolute perfection. If your designer is stacked with other work, explain that a minimum viable version of your experiment is acceptable.

- Pick low effort items – if times are hard and your team are going through a busy period, pick low effort items to test rather than items you know will take a lot of dev effort. For example, if you’re a PM working on an ecommerce product, testing a new free delivery method might mean making a small change in your admin panel that can be done by you – bypassing the dev team entirely. If you’re going through a particularly busy period, picking low effort items to test can be worth it – or it may be worth parking experiments for the time being until you’re out of your busy period.

- Use prototypes – rather than building a real version of the feature you’re testing, use a flat, clickable image to test the proposition instead. For example, you could design an entirely different version of a landing page as a clickable image and direct users to the real version of the site whenever they click on an element. Yes, it’s a little clumsy, but you’ll often get the data you need without having to build it all up front.

4. Communicate your findings and close the loops

Once your experiment demonstrates tangible, statistically significant results, it’s no use keeping the findings to yourself. Go back to your stakeholders and the wider business and articulate clearly what the result of the experiment was and what the next steps could be.

Share a few data points but don’t overwhelm everyone. Keep it clear, succinct and back it up with a graph or two. If possible, speak up in your cross functional meetings or weekly all hands if you have them.

Closing the loop of experiments is essential; your stakeholders need to know that their input is being listened to and that the experiments that have been run have led to tangible results which can be assessed and prioritised accordingly.

What acting on your results means depends on the experiment. If you were running an A/B test between different pages, it would mean keeping the winning version. If you were testing a new feature on a segment of your audience, it would mean gradually rolling out the feature to the remaining users. If you were testing new pricing models, it would mean taking the plunge and changing the prices across the board.

It’s likely that before you can make a final decision on some of the experiments you’ve been running you’ll need to consult with at least one, if not many, of the stakeholders you engaged with at the beginning. This is not always the case, but it is for larger scale experiments which impact other parts of your business.

If you’re a plucky startup you can typically go ahead and roll out the better performing page or feature, but in larger organisations closing the loop with stakeholders by articulating what the next steps are is crucial.

You may need additional development work to implement the feature or page you were testing. If this is the case, prioritise the work as you would with any other work. Don’t be tempted to put it to the top of the backlog because you’ve uncovered something which you or your stakeholders personally find exciting or interesting or because it proves a hypothesis you created. Whilst you may have learned a lot from your experiment, that doesn’t mean you have to bypass the rest of your prioritised backlog and get cracking on the work immediately.

Keep an archive / record of your experiment results so that you avoid needlessly repeating the same experiment!

Not sure what experiments to run for your product?

Whilst you probably have a team of stakeholders who have ideas galore, sometimes you need a little inspiration to get you started.

Here’s a list of experiment ideas, broken down by product category, to help you come up with your own. This is by no means an exhaustive list of all the possible experiments you could run – it’s just something to get your juices flowing.

| Product type | Experiment ideas | Definition / why it matters |
| --- | --- | --- |
| API product | API appetite experiment | Before you decide to build an external API, conduct an API appetite experiment to test the appetite for the API you’re considering. Build a landing page which describes the API you’re considering and track the number of sign ups for this. |
| Ecommerce | Checkout flow | Experiment with the stages of the checkout flow. For example, what impact does reducing the payment flow down from 3 steps to 1 step have? |
| Ecommerce | Payment options | Does adding multiple payment options increase conversion? |
| Ecommerce | Email campaign content | Emails drive a significant number of sales for ecommerce companies. Try A/B testing different email nurturing or specific email campaigns to discover which messaging works best. |
| Ecommerce | Delivery options | Delivery is known to be a barrier to purchase on ecommerce sites. Try experimenting with different delivery pricing, offering free delivery over a specific basket value threshold or offering free delivery to every customer to discover how this impacts your conversion rates and average order value (AOV). Threshold specific delivery pricing can increase AOV. |
| Subscription | Pricing | Pricing is a key variable to experiment with in subscription products. Different price points, price bundling and using different price points as anchors can be effective ways to experiment with pricing. |
| Subscription | Retention communication | Preventing churn and retaining customers is crucial in subscription products and email is a tool that is typically used to do this. If your subscriber base is large enough, try experimenting with different messaging in your emails to lapsed customers to prevent them from churning. |
| Subscription | Payment method options | Experiment with adding a new payment option e.g. PayPal. This may need to be done on a mocked-up wireframe as actual development would be challenging. |
| Subscription | Subscription ‘call to actions’ | Experiment with different CTAs and locations. You could start simply by changing the current CTA copy and work up to more invasive changes that require overall design / UX changes. |
| Subscription | Landing pages | Experiment with your subscription landing page e.g. images, further detail, testimonials etc. Importantly, test one variable at a time and have one key metric you’re looking to positively influence. |
| Lead generation | Sign up form | Lead generation involves generating leads – usually through sign up forms. Try experimenting with the fields in the form, the placement of the form and the primary CTA you’re using to generate leads. For example, is requiring telephone numbers reducing lead conversion but increasing lead quality? Is placing a sticky form above your content as the user scrolls down improving conversion or putting users off? |
| Lead generation | Marketing channels | How can you generate more leads if your marketing channels are not attracting the right audience? Try experimenting with identical landing pages but different marketing channels to see how this impacts your lead conversion rate. |
| Lead generation | Landing page elements | The elements on the page – the images, forms, headers – will all contribute to the lead conversion %. Try fundamentally changing the position of the elements on the page to see what impact this has on your conversion %. |
| Lead generation | Copy changes | Copy changes can have a profound impact on lead generation. Header text changes, sign up form text changes and paragraph text changes can all impact conversion. Copy length can also be a factor, with the traditional advice suggesting that shorter copy is better than longer copy. This isn’t always true; often longer, impactful copy can increase the perceived value of a product. Try experimenting with different copy lengths. |
| Lead generation | CTA button changes | Button color changes by themselves don’t often bring about fundamental shifts but they can give you a little uplift. Try combining color changes with call to action (CTA) changes for maximum effect e.g. changing ‘sign up’ to ‘request information’ can lead to an increase in conversions since the level of commitment appears lower. Semantics do matter. Try experimenting with various words to find words that improve conversion. |
| Lead generation | Value proposition | What is your value proposition? What are you offering potential customers? Conduct an experiment to find out if offering a modified or fundamentally different version of your value proposition might lead to increased customers. For example, if you’re offering a monthly subscription product, conduct an experiment to see how switching to a one off payment model might impact your lead generation. |